System Requirements

These are minimum requirements for SwarmFS servers in production:

| Component | Minimum | Notes |
|---|---|---|
| OS | RHEL/CentOS 7 | CentOS 7.4+ SELinux policy management: edit /etc/selinux/config and set SELINUX=permissive, or map the ports needed by Swarm using semanage port: semanage port -a -t http_port_t -p tcp 91; semanage port -a -t http_port_t -p tcp 9200; semanage port -a -t http_port_t -p tcp 9300 |
| CPU | 2 cores | |
| RAM | 4 GB (minimum) | 4 GB for a single export. Add an extra 4 GB for each additional export when using default export memory settings. |
| Drive | Dedicated /var/log partition | Provide at least 30 GB for SwarmFS logs. |
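
The SELinux port mapping from the OS requirement can be sketched as a dry run. The function name and the SWARM_PORTS variable are illustrative, not part of SwarmFS; the script only prints the commands so they can be reviewed before being run as root.

```shell
#!/usr/bin/env bash
# Dry-run sketch: print the semanage commands for the ports listed in the
# requirements. Nothing is executed here.

# Ports taken from the OS requirement (illustrative variable name).
SWARM_PORTS="91 9200 9300"

print_port_mappings() {
    local port
    for port in $SWARM_PORTS; do
        echo "semanage port -a -t http_port_t -p tcp $port"
    done
}

print_port_mappings
```

If semanage reports that a port is already defined in the local policy, running the same command with -m instead of -a modifies the existing mapping.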

The RAM requirements increase with the number of exports, but the greatest impact on CPU and RAM is driven by the number of concurrent client operations. The more concurrent client operations being served, the more RAM and CPU cores needed. How much and how many depends on what the clients are handling (in terms of file/object sizes), so focus on whether the allocated resources are being fully utilized. As they near full utilization, add more.
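
As a rough illustration of the per-export sizing rule, the following sketch computes the minimum RAM for a given number of exports, assuming default export memory settings. The function name is hypothetical, and the result is only a floor: concurrent client load can require more.

```shell
#!/usr/bin/env bash
# Sketch of the sizing rule: 4 GB for the first export plus 4 GB for each
# additional export (default export memory settings assumed).
swarmfs_min_ram_gb() {
    local exports="$1"
    if [ "$exports" -lt 1 ]; then
        exports=1   # the 4 GB minimum applies even before exports exist
    fi
    echo $(( 4 * exports ))
}

swarmfs_min_ram_gb 3   # three exports: prints 12
```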

Important

VMs - Where write performance is critical, install the SwarmFS server on physical hardware (not as a VM).

Paging - Paging negatively impacts performance. If paging out occurs, increase RAM rather than increasing disk space for paging.

Debug - When mounting exports directly on the SwarmFS server, do not enable verbose (DEBUG) logging.

Shared Writes - The Linux UMASK (user file creation mask) defaults to 0022, so directories are created with permissions 755 and only the owner may write to them. To share writes among multiple users of the same group, change the permissions on the folder, or set the UMASK to 0002 so that group write access applies to all newly created folders. Add the setting to /etc/bashrc or /etc/profile to persist the change across reboots.
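
The effect of the UMASK on new directories can be checked with a short sketch. The helper name is hypothetical; it works inside a throwaway temporary directory and relies on GNU stat, as shipped with CentOS.

```shell
#!/usr/bin/env bash
# Print the permission bits a new directory receives under a given umask.
# The umask change is confined to a subshell, so the calling shell is unaffected.
dir_mode_with_umask() {
    local mask="$1" tmp mode
    tmp="$(mktemp -d)"
    mode="$(umask "$mask"; mkdir "$tmp/shared"; stat -c '%a' "$tmp/shared")"
    rm -rf "$tmp"
    echo "$mode"
}

dir_mode_with_umask 0022   # prints 755: only the owner can write
dir_mode_with_umask 0002   # prints 775: the group can write as well
```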

Preparing Export Configurations

Before installing any SwarmFS servers, prepare the export configurations that the installation will reference:

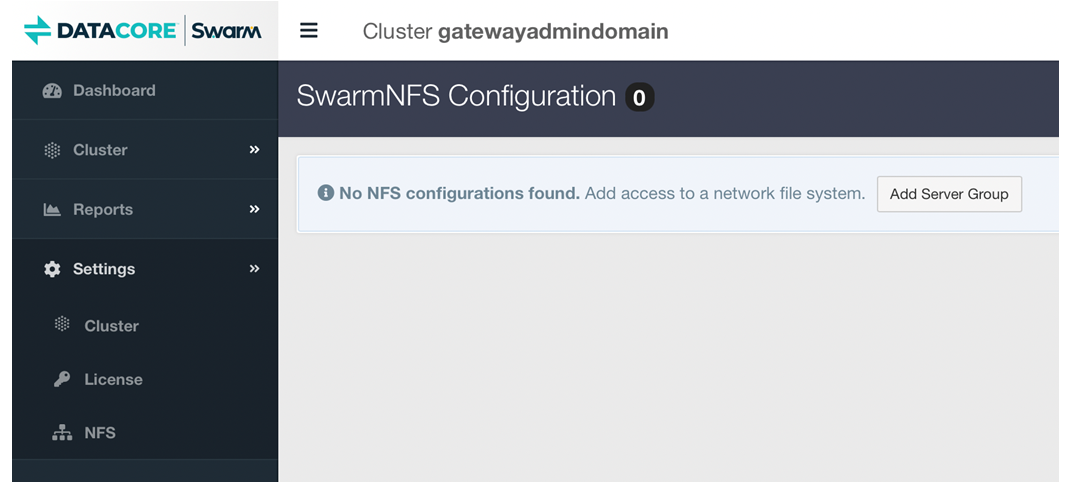

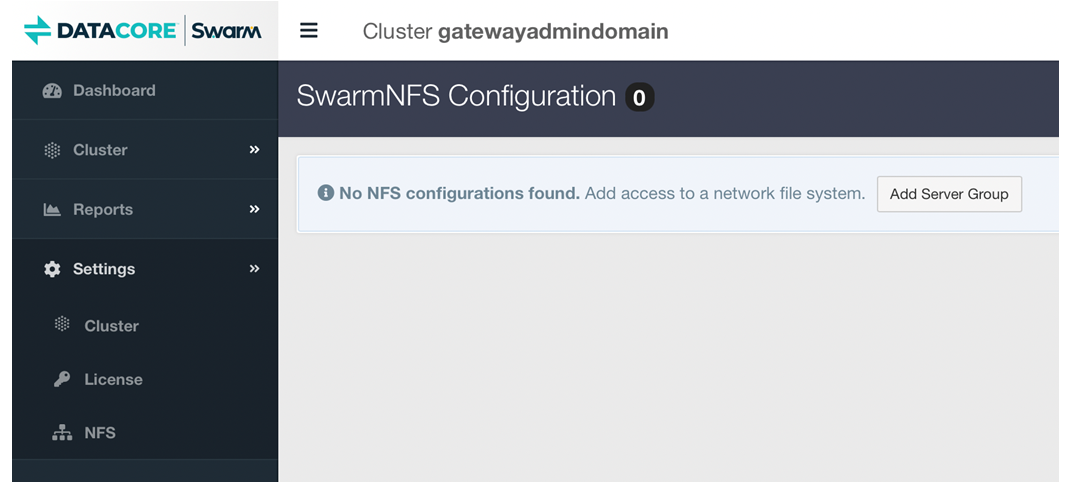

Select Settings > NFS in the Swarm UI.

Define one or more server groups (each group has one export configuration URL that is shared among a set of servers). See SwarmFS Export Configuration.

Define one or more exports within each group, which become the mount points for applications.

Tip: The export URL is valid but non-functional until exports are defined. Exports can be added and updated at any point.

Installing SwarmFS

The RPM for SwarmFS includes an interactive script for completing the needed SwarmFS configuration for a specific Swarm Storage cluster. The script prompts for the URL of the SwarmFS JSON export configuration created for Swarm Storage, so the NFS exports must be defined via the Swarm UI.

Note

The script enables core file generation; it can be disabled via the nfs-ganesha.service file (/usr/lib/systemd/system/) or through the system-wide configuration.

Run the following process on each CentOS 7 system that will be a SwarmFS server:

Download the SwarmFS package from the Downloads section on the DataCore Support Portal.

Install the EPEL release, which has the needed packages for NFS:

yum -y install epel-release

Some later EPEL releases are missing the needed Ganesha and Ganesha utility packages, so install those:

Navigate to the NFS community build service.

Scroll down to the RPMs list and download both packages:

Install both packages:

yum -y install nfs-ganesha-<version>.rpm

yum -y install nfs-ganesha-utils-<version>.rpm

Install the Swarm RPMs:

yum install caringo-nfs-libs-<version>.rpm

yum install caringo-nfs-<version>.rpm
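
The package installation order above can be sketched as a dry run that prints the commands for review. The function name and both version arguments are placeholders for the version strings of the RPMs actually downloaded; the sketch assumes the two Ganesha packages share one version and the two Swarm packages share another.

```shell
#!/usr/bin/env bash
# Dry-run sketch: print the install commands in the documented order.
# Arguments are hypothetical stand-ins for the <version> part of each file name.
swarmfs_install_plan() {
    local ganesha_ver="$1" swarmfs_ver="$2"
    echo "yum -y install epel-release"
    echo "yum -y install nfs-ganesha-${ganesha_ver}.rpm"
    echo "yum -y install nfs-ganesha-utils-${ganesha_ver}.rpm"
    echo "yum install caringo-nfs-libs-${swarmfs_ver}.rpm"
    echo "yum install caringo-nfs-${swarmfs_ver}.rpm"
}

swarmfs_install_plan "GANESHA_VERSION" "SWARMFS_VERSION"
```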

Run the SwarmFS configuration shell script that generates the local SwarmFS service configuration, validates the environment, enables the SwarmFS services, and then starts the SwarmFS services.

Log in to the Swarm UI and navigate to Settings → NFS.

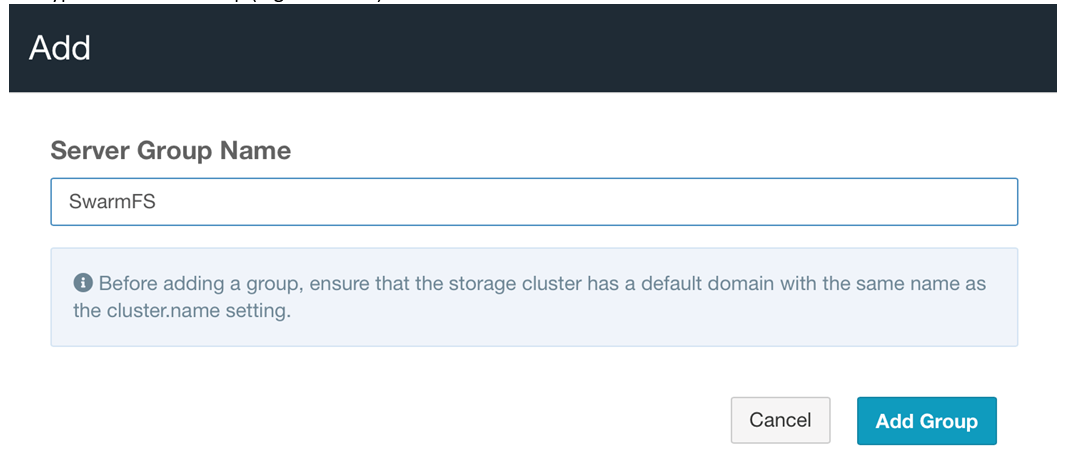

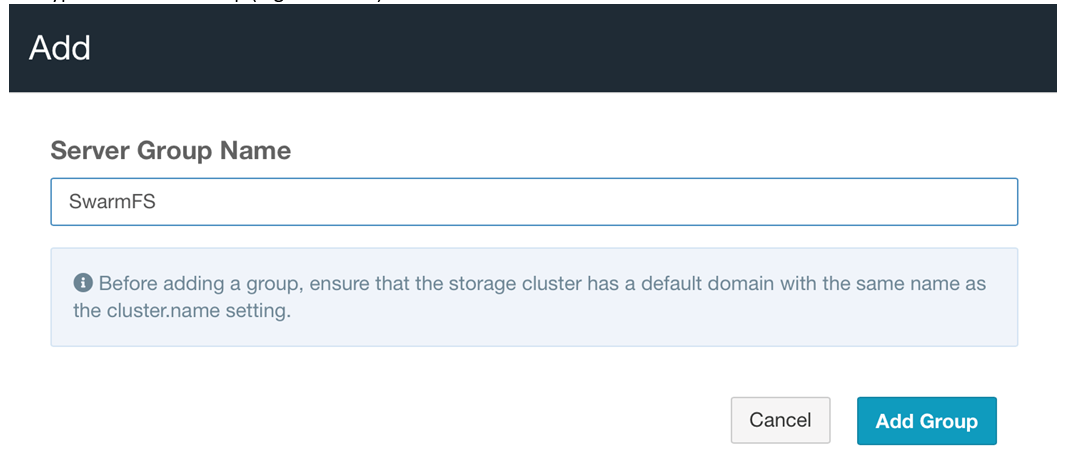

Click Add Server Group to create a new NFS Exports Group.

Provide the name of the group (e.g., SwarmFS) and click Add Group.

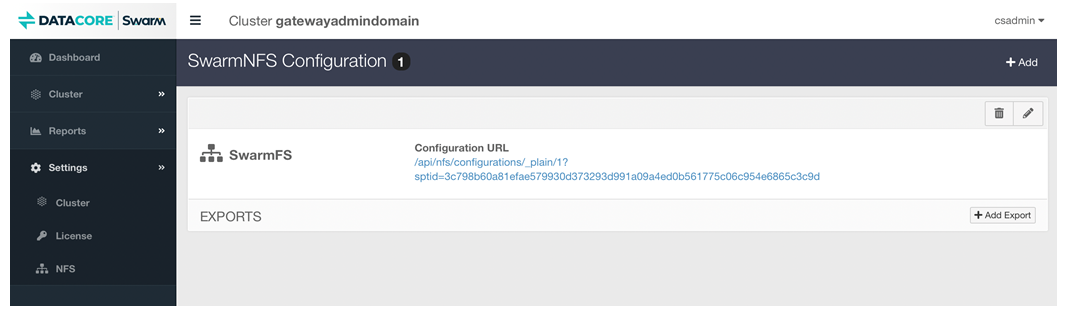

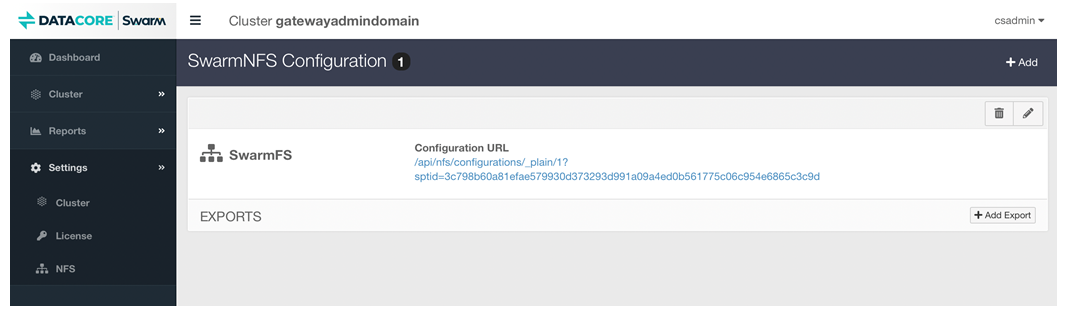

Click the link of the newly created NFS Export Group to open it in a new window or tab.

The new window or tab shows the export configuration (in JSON format). Copy its URL.

Run the script.

SwarmNFS-Config

Open the SwarmNFS-config wizard:

[root@swarmfs32-01 ~]# SwarmNFS-config

When prompted, paste the copied URL, for example "http://{gateway_ip}:91/api/nfs/configurations/_plain/1?sptid=244ee2c5072d9f0888a13d31d007c6cf37121369ca35636a4b4486c82e1e1e3a".

In the nfs-ganesha configuration (/etc/ganesha/ganesha.caringo.conf), update the URL by adding the credentials, followed by @, immediately before the gateway address:

Configuration="http://dcadmin:datacore@{gateway_ip}:91/api/nfs/configurations/_plain/1?sptid=244ee2c5072d9f0888a13d31d007c6cf37121369ca35636a4b4486c82e1e1e3a"

Here the credentials are:

username - dcadmin

password - datacore
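
The URL rewrite in this step can be sketched as a small helper that places user:password@ after the scheme. The function name is hypothetical, and the sketch only prints the new URL; it does not edit ganesha.caringo.conf.

```shell
#!/usr/bin/env bash
# Insert "user:password@" between the scheme and the host of a URL.
add_url_credentials() {
    local url="$1" user="$2" pass="$3"
    local scheme="${url%%://*}"   # text before the first "://"
    local rest="${url#*://}"      # text after the first "://"
    echo "${scheme}://${user}:${pass}@${rest}"
}

# Example using the URL and sample credentials from this page.
export_url="http://{gateway_ip}:91/api/nfs/configurations/_plain/1?sptid=244ee2c5072d9f0888a13d31d007c6cf37121369ca35636a4b4486c82e1e1e3a"
add_url_credentials "$export_url" dcadmin datacore
```

Under standard URL syntax, a password containing reserved characters such as @, : or / needs to be percent-encoded before it is placed in the URL; the sketch does not do that.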

Restart the nfs-ganesha service:

systemctl restart nfs-ganesha

Enable the service to allow SwarmFS to start automatically on boot:

systemctl enable /usr/lib/systemd/system/nfs-ganesha.service

Run this command to verify the status of the services:

systemctl status nfs-ganesha

This status report is comprehensive and includes which processes are running.
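
For scripted checks, a shorter test than the full status report may be convenient. The sketch below uses the standard systemctl is-active and is-enabled subcommands; the function name is hypothetical, and treating "active" plus "enabled" as the healthy state is an assumption about how the service is expected to run.

```shell
#!/usr/bin/env bash
# Report whether a unit is running and set to start on boot.
# Returns 0 only when the unit is both active and enabled.
swarmfs_service_ok() {
    local unit="${1:-nfs-ganesha}"
    local active enabled
    active="$(systemctl is-active "$unit" 2>/dev/null)"
    enabled="$(systemctl is-enabled "$unit" 2>/dev/null)"
    echo "unit=$unit active=$active enabled=$enabled"
    [ "$active" = "active" ] && [ "$enabled" = "enabled" ]
}

# Usage on a SwarmFS server:
#   swarmfs_service_ok nfs-ganesha && echo "SwarmFS service is up"
```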

Tip: On startup, SwarmFS may generate WARN level messages about configuration file parameters. These are harmless and can be ignored.